James Peck should be a name familiar to REAKTOR heads and hip-hop fans alike. Whether creating ensembles such as the wildly popular – and free – VHS Audio Degradation Suite (still the most downloaded ensemble of all time), or producing music, sample packs and live MPC jams for his YouTube channel as memorecks, Peck takes a granular, technically-focused approach to music that nevertheless maintains a strong human element. Dream Machine feels like the logical culmination of his talents, and fresh from the launch of Dream Machine V2, we sat down with James and Daniel to discuss the ins and outs of their Guinness World Record-breaking project.

This is a pre-recorded clip, but you can check out the current live stream at dreammachine.ai.

Before we get into the specifics of Dream Machine, could we hear a little about who you both are and what you do?

James: I’m James Peck aka memorecks. I’m a producer, songwriter, sound designer, I make Reaktor instruments, a whole bunch of stuff. Most recently I’ve embarked on a project called Dream Machine, which is a 24/7, procedurally-generated radio station.

Dan: I’m Dan, a buddy of James. We met back in high-school. My background is in creative direction, and I run a studio called Space Agency. James showed me the engine he was building in Ableton [Live], and all the cool characters he was doing, and we basically sat down and put together this Dream Machine thing. I help out with the planning, integrations, collateral materials, socials, partnerships and strategy, as well as working with James on new versions of ideas.

Where did the initial idea for Dream Machine come from?

James: It started with the concept of being able to procedurally generate beats. I was making another project a couple years ago called Twitch Plays The Synth – a custom Reaktor synthesizer you could control with Twitch chat commands that ran 24/7, and I had this idea, “what if I ran a 24/7 lofi stream?” Initially we took a bunch of samples and drum loops, put everything in the same key, and randomized them. From there it turned into something that doesn’t use samples at all. We’re generating MIDI notes, generating the mix elements, all the production is happening in a similar fashion. I was also doing 3D modelling at the time, and I was making these little blobby characters.

Dan: Like evil Crazy Bones.

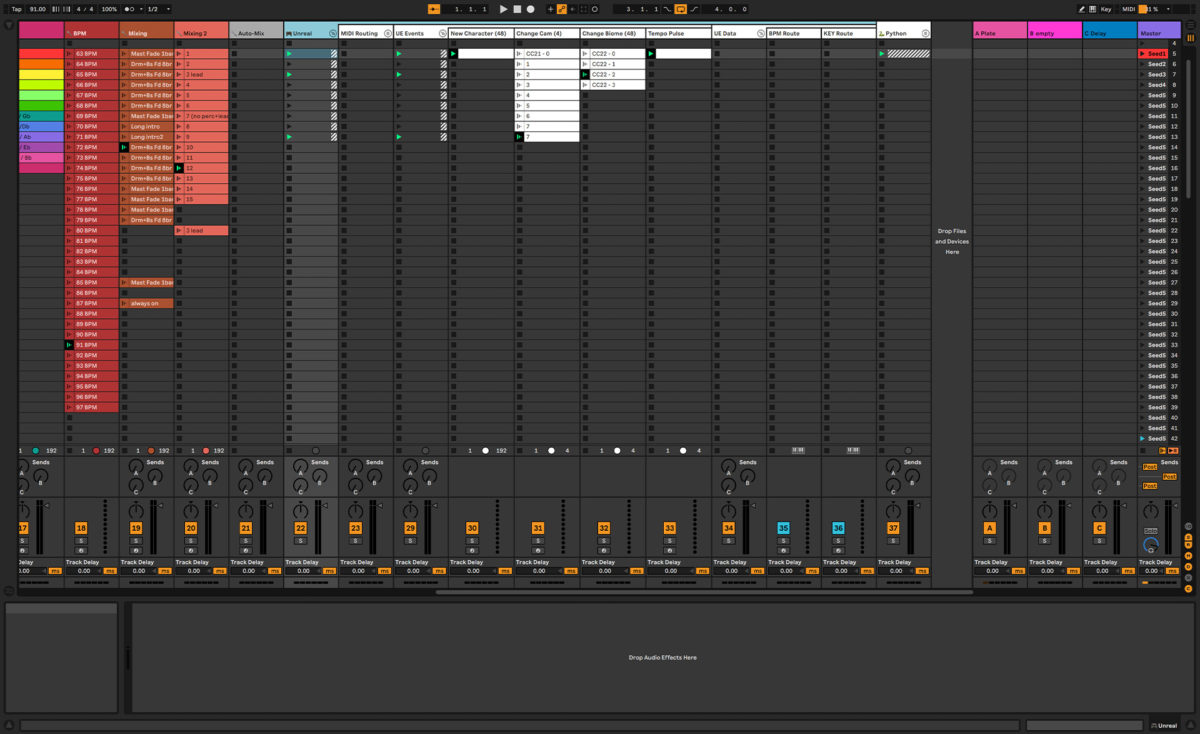

James: I was putting them on Instagram for fun, and Dan’s like, “Yo, you should combine your 3D dudes with your lofi generator thing.” Next thing you know Dream Machine is born. I had to figure out how to do the visuals for the stream as well, so I had to learn Unreal Engine, make Ableton talk to Unreal Engine, and then get that streaming 24/7. It turned into this huge endeavour, it was crazy. Every two to three minutes, there’s a new beat and a new visual. But it all starts in Ableton with a bunch of MIDI clips, Max for Live devices, Native Instruments plugins, all sorts of stuff.

What did getting Dan on board bring to the project?

James: The audio engine and the Unreal Engine, Dan married those two and brought the concept to life. A project like this, you need other people involved, and Dan’s been the other guy planning what to do with it, because there’s a question at the end of the day, when you’ve spent hours and hours on this thing: “Well, what are you going to do with it?” Dan’s finding creative ways to bring Dream Machine not only to people on the internet where it lives, but to things in real life.

Could you go through how Dream Machine is put together and how each of its constituent parts are talking to each other?

James: Oh hell yeah I can. We have a Mac Mini that runs Ableton, and beside it is a gaming PC with an Nvidia graphics card. Each has an audio interface, a Steinberg UR22 MK2. Ableton sends audio and MIDI from the Mac Mini out from one of the interfaces, and the other interface connected to the PC picks that up. The audio goes into OBS and Unreal Engine, and the MIDI goes to Unreal Engine. So that’s how they’re connected.

In terms of the software that’s running and how it works, it’s essentially just an Ableton Live session. Everything happens in Ableton, from the MIDI clip generation, to the samples that are loaded in, to the plugins that we may or may not be using.

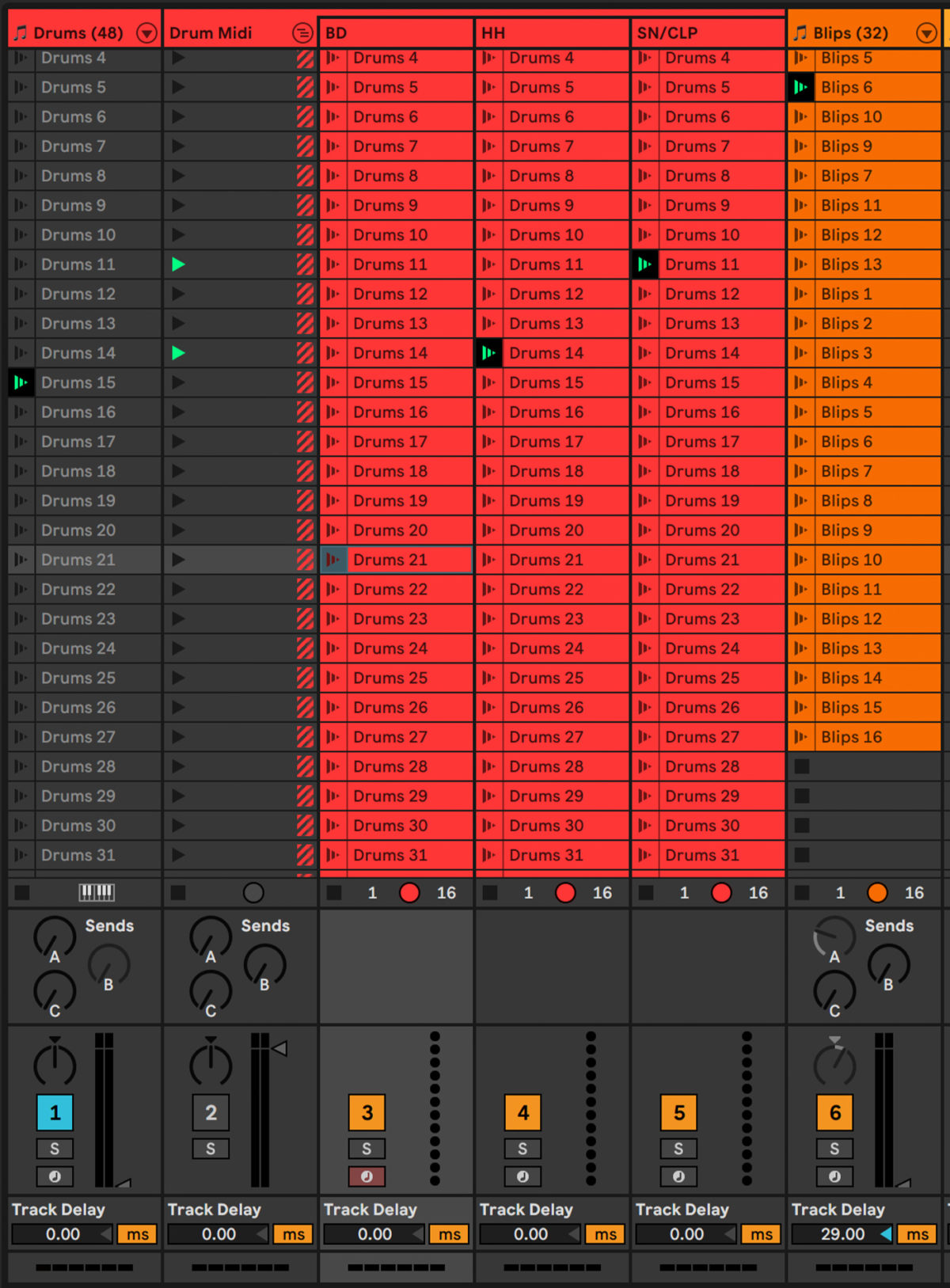

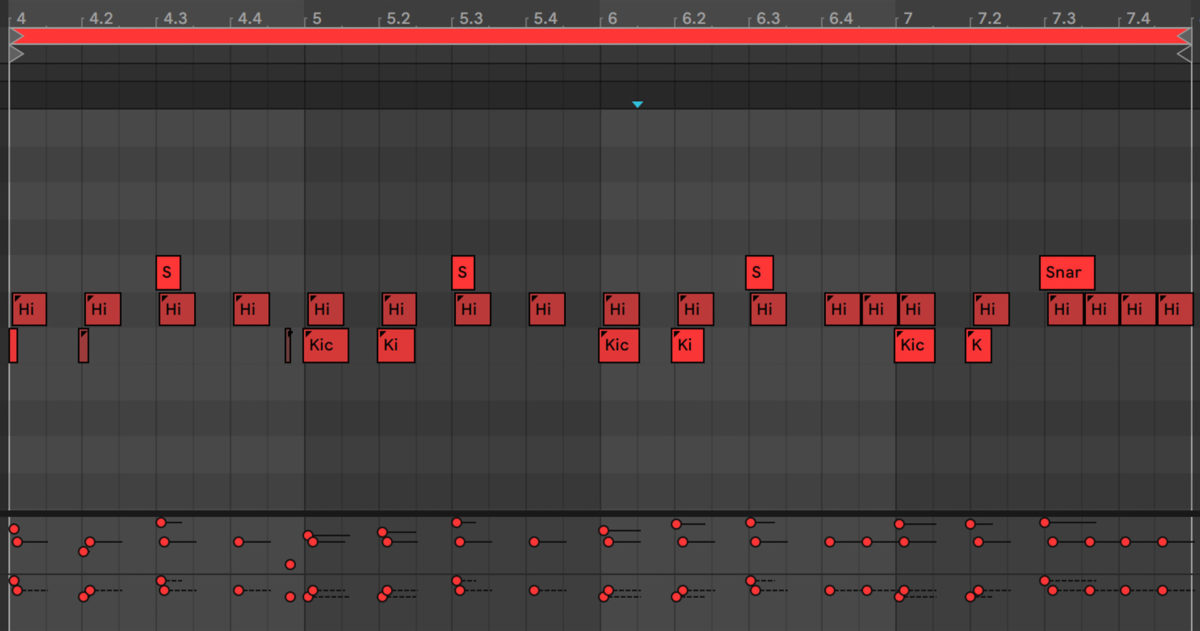

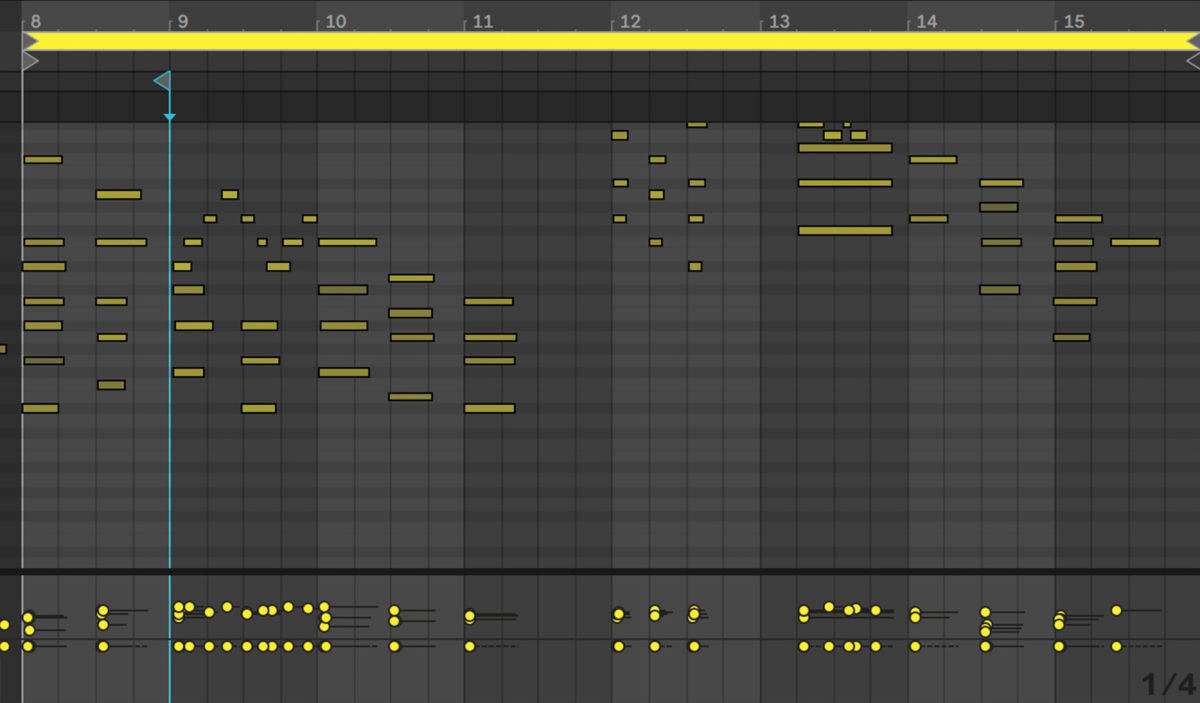

I recorded a hundred or so drum patterns, and choose elements from each to make a new pattern. Every 48 bars a new drum pattern gets created.
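As a rough illustration of the recombination idea described above, here is a minimal Python sketch. It is not the Ableton setup itself: the pattern format, the three example patterns, and the names `make_pattern` and `pattern_for_bar` are assumptions made for this example. Only the 48-bar interval comes from the interview.

```python
import random

# Hypothetical representation: each recorded pattern maps a drum voice to the
# 16th-note steps (0-15) on which it hits within a one-bar loop.
RECORDED_PATTERNS = [
    {"kick": [0, 8], "snare": [4, 12], "hat": [0, 2, 4, 6, 8, 10, 12, 14]},
    {"kick": [0, 6, 10], "snare": [4, 12], "hat": [2, 6, 10, 14]},
    {"kick": [0, 3, 8, 11], "snare": [4, 13], "hat": list(range(16))},
]

BARS_PER_PATTERN = 48  # from the interview: a new drum pattern every 48 bars


def make_pattern(patterns, rng):
    """Build a new pattern by taking each voice from a randomly chosen source."""
    voices = patterns[0].keys()
    return {voice: list(rng.choice(patterns)[voice]) for voice in voices}


def pattern_for_bar(bar, patterns, seed=0):
    """Return the pattern active at a given bar; it changes every 48 bars."""
    section = bar // BARS_PER_PATTERN
    # Seeding per section keeps the pattern stable for the whole 48 bars.
    rng = random.Random(seed * 1_000_003 + section)
    return make_pattern(patterns, rng)
```

Because each voice is drawn independently, the kick from one recording can end up under the hi-hats from another, which is one plausible reading of "choose elements from each".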

Some of the MIDI data that the drum tracks pull from. You can think of this as a menu of sorts.

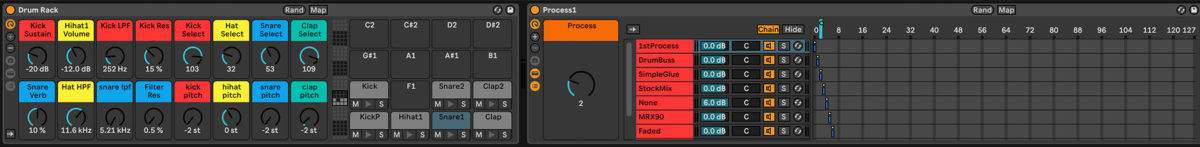

This is the drum rack setup. I’m using a ‘mega-rack’ type kit with up to 127 different samples per cell. Every time a new beat is made it chooses a new set of samples, and tweaks parameters like pitch and filter.
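A hedged sketch of what "choose a new set of samples and tweak parameters" could look like in code. The cell names and the pitch and filter ranges are invented for illustration; only the figure of up to 127 samples per cell is taken from the text.

```python
import random

SAMPLES_PER_CELL = 127  # "up to 127 different samples per cell"

# Hypothetical ranges; the interview does not give actual values.
PITCH_RANGE_SEMITONES = (-2.0, 2.0)
FILTER_RANGE_HZ = (2000.0, 18000.0)


def randomize_kit(cells, rng):
    """Pick a sample index and bounded pitch/filter settings for each cell."""
    kit = {}
    for cell in cells:
        kit[cell] = {
            "sample_index": rng.randrange(SAMPLES_PER_CELL),
            "pitch": rng.uniform(*PITCH_RANGE_SEMITONES),
            "filter_hz": rng.uniform(*FILTER_RANGE_HZ),
        }
    return kit


# One call per new beat would yield a fresh kit.
kit = randomize_kit(["kick", "snare", "hat"], random.Random(1))
```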

After the MIDI is generated, the drum track goes through an assortment of randomized effects. One of the NI effects I’m using here is Transient Master to get some more punch.

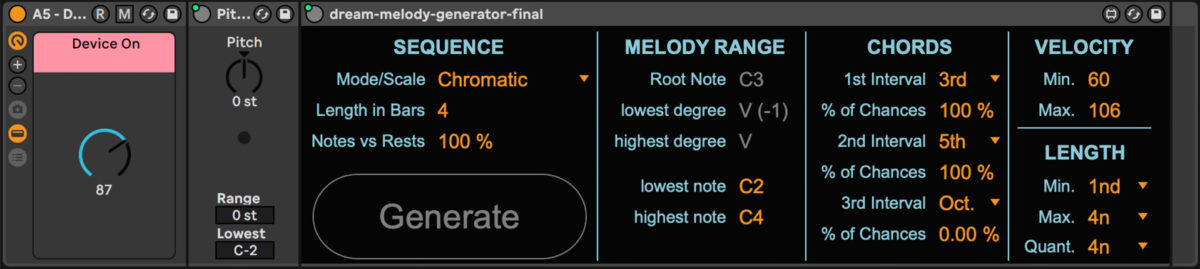

We commissioned a custom Max for Live device that generates randomized MIDI patterns and repeats them when desired. These notes eventually get locked to a specific scale and key.
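The custom device is not public, so the following is only a sketch of the "lock to a scale and key" step, written in Python rather than Max. The function name `lock_to_scale` and the nearest-note, ties-go-down rule are assumptions for this example.

```python
# Semitone offsets from the root for two common scales.
SCALES = {
    "major": (0, 2, 4, 5, 7, 9, 11),
    "minor": (0, 2, 3, 5, 7, 8, 10),
}


def lock_to_scale(note, root, scale):
    """Move a MIDI note (0-127) to the nearest pitch in the given key and scale.

    Ties resolve downward. `root` is the key's pitch class (0 = C, 9 = A).
    """
    allowed = {(root + step) % 12 for step in SCALES[scale]}
    for distance in range(12):
        for candidate in (note - distance, note + distance):
            if 0 <= candidate <= 127 and candidate % 12 in allowed:
                return candidate
    raise ValueError("scale has no pitches")


print(lock_to_scale(61, 0, "major"))  # C#4 in C major moves down to C4: 60
```

Running every randomly generated note through a function like this is one way to make sure that arbitrary input still lands in key.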

Another approach to generate chords was to record myself playing hundreds of loops and cycle through them using Follow Actions. By enabling legato mode, you can change which clip is playing while maintaining your position in the timeline. This leads to some interesting melodic ideas and combinations.
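To make the legato behaviour concrete, here is a small Python model of it. It does not use Ableton's API; the chord names, the `schedule` dictionary and the function names are all made up for the example. The point is only that a newly launched clip is read at the shared timeline position instead of restarting from its first step.

```python
def note_at(clip, position_beats):
    """Return the chord sounding at a position inside a looping clip."""
    step = int(position_beats) % len(clip)
    return clip[step]


def play(clips, schedule, total_beats):
    """Walk a shared timeline, switching clips without resetting the position.

    `schedule` maps a beat number to the index of the clip that takes over there.
    """
    current = 0
    output = []
    for beat in range(total_beats):
        current = schedule.get(beat, current)
        # Legato-style: the new clip is read at the shared position `beat`,
        # not restarted from its own first step.
        output.append(note_at(clips[current], beat))
    return output


clip_a = ["Cmaj7", "Am7", "Dm7", "G7"]
clip_b = ["Fmaj7", "Em7", "Dm9", "Cmaj9"]
print(play([clip_a, clip_b], {2: 1}, 4))  # ['Cmaj7', 'Am7', 'Dm9', 'Cmaj9']
```

The output starts with the first half of one loop and finishes with the second half of another, which is how new combinations can emerge from existing recordings.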

After the MIDI is generated for the chord and melody tracks, it goes through randomized processing. Here I’m using Kontakt as a sound source and taking full advantage of the multibank feature to add more variation to each track. Also featured is my custom Reaktor effect VHS Audio Degradation Suite and NI’s VC 76 for compression.

Using various Max for Live plugins I’m able to control each track in the session from any other track. Here I’m using dummy clips and automation to change the BPM, key and scale/mode of the songs. The arrangement of the song is also decided here. Mixing automation allows each track to fade in and out at different points.

In order to let Unreal Engine know what’s going on in the Ableton session, MIDI notes and CCs are routed through a physical MIDI cable. Unreal picks up the MIDI and decides what to do with it.

Other useful tools for procedural music are the Arpeggiator, Random LFO and Velocity modules.

This is what the session looks like.

What’s been the trickiest thing to get right with this system?

James: The hardest thing to generate is rhythm. It’s really easy to generate notes that go well together, but music is all about rhythm. At first it was just jumping through a bunch of different chord clips and would sound slightly aleatoric, meaning it would sound random. There’d be times where your brain would pick up a chord progression or some sort of coherent harmonic structure, but it was lacking a little bit. So the next step was to hire a developer off Fiverr to make a custom Max for Live device that can generate a random pattern and hold it. This lets us randomise things but also have repetition.
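The "generate a random pattern and hold it" idea can be sketched in a few lines of Python. This is not the commissioned Max for Live device; the class name `HeldPattern`, the 16-step length and the `density` parameter are assumptions chosen for illustration.

```python
import random


class HeldPattern:
    """Generate a random step pattern, then repeat it until told to regenerate."""

    def __init__(self, steps=16, density=0.4, seed=None):
        self.steps = steps
        self.density = density  # hypothetical: chance that a step holds a note
        self.rng = random.Random(seed)
        self.pattern = self._generate()

    def _generate(self):
        return [self.rng.random() < self.density for _ in range(self.steps)]

    def regenerate(self):
        """Roll a new pattern, for example when a new beat starts."""
        self.pattern = self._generate()

    def is_hit(self, step):
        """True if the held pattern places a note on this step."""
        return self.pattern[step % self.steps]


rhythm = HeldPattern(seed=3)
bar_one = [rhythm.is_hit(s) for s in range(16)]
bar_two = [rhythm.is_hit(s) for s in range(16, 32)]
print(bar_one == bar_two)  # True: the random pattern repeats until regenerated
```

Randomness happens only in `regenerate`, so the listener hears repetition between rolls, which is the balance James describes.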

I have instances of this device for different parameters to generate notes. There’s also an arpeggiator that’s adding more notes on top of those notes, something that’s shifting the pitch randomly, and something that adds random velocity and note delay. I picked up all these cool little Max for Live devices over the course of making this that are very useful for making this work.

I’m also using Kontakt because the sounds are crazy, and you can load up a multibank with all the stuff you want. You can send a program change to your instance of Kontakt, and it’ll play a different patch you have loaded up. You can also control the number of voices and therefore control the memory limit.
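For reference, a MIDI Program Change is a two-byte message: a status byte of `0xC0` plus the channel, followed by the program number. The Python sketch below only builds those bytes; how they reach Kontakt (in this setup, from inside Ableton) is outside its scope, and the function name `program_change` is made up for the example.

```python
def program_change(program, channel=0):
    """Build the two bytes of a MIDI Program Change message.

    `program` is 0-127 and `channel` is 0-15 (shown as 1-16 in most software).
    """
    if not 0 <= program <= 127:
        raise ValueError("program must be 0-127")
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    return bytes([0xC0 | channel, program])


print(program_change(5).hex())  # c005
```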

Is Kontakt bouncing between patches you’ve made yourself?

James: I think these are all from the Native stock library. I’m not using any custom Kontakt instruments. I am using tons of my custom Reaktor instruments later in the chain as effects.

Is the audio processing happening within each channel, or do you have busses for that?

James: It’s on the core tracks alone, and then it goes to the master channel, where there’s a whole bunch more stuff happening there. I have my VHS plugin right at the end of the chain, and this will either get turned on or turned off depending on where it gets randomised to. So if the Flutter or Warp wants to change every time there’s a new beat, all I have to do is add that as a macro.

I have another tape effect I made on the master channel, the MRX90. It works in a similar way, where it’s either on or off and there’s a few parameters I’m adjusting on it. Shallow Water is another. This is a recreation of a boutique guitar pedal, and it’s a low-pass gate with pitch modulation in it.

The idea behind all these effects is, when you’re producing real music, you’re making these decisions in somewhat of an intentional way, but they’re also somewhat arbitrary and random, and you’re using a guiding principle of, “I’m not going to do some crazy shit, I’m probably going to do something like this.” I’m setting ranges for all these knobs and all these controls, so nothing ever gets too wonky. Essentially, all three of these plugins are doing some sort of pitch modulation to give that extra character or imperfection on to.

What other Native plug-ins are you using?

James: VC 76 is hot fire, Passive EQ, these are classic recreations of very familiar effects. I have all the Native products and they’re very easy to install, they’re very quick to set up, which is great. For bass I have an instance of Kontakt set up with Scarbee Bass, Rickenbacker, J-Bass, and some of my own Operator patches. These are just simple, lofi bass sounds and it’s choosing one randomly, and then going through some effects. I dig the [Reaktor] effects because they’re not CPU intensive and have very little latency.

And what’s happening in terms of AI here?

James: There’s a few ways I’ve tried to do it. I’ve tested every AI tool in the book to create stuff, and settled on controlled randomness for now, until we develop a bit more tooling for it. I’ve used Google Magenta, which has a Max for Live plugin that generates clips. It sucks. The most successful one I’ve used is this thing called Splash Pro, which used to be a Roblox app that people could make music with. A lot of the AI stuff is using neural nets that have been trained on existing MIDI, and it can produce some good stuff, but I haven’t found a tool that’s been the one. I’ve been able to make better clips with my random Max for Live device than Splash Pro sometimes. I was using a lot of Splash Pro to generate actual clips, so I would just fill up the timeline with as many clips as possible, and then the Follow Actions would go to those clips. The problem is eventually you’ll run out of clips in Ableton, so my goal is to eventually make a Python device or Max for Live device that live-generates AI patterns.

A lot of the time this AI-generated or procedural music, the production is garbage. So I guess I’m trying to take my skill set as a somewhat-decent producer, and focus more on mixing. I think Dream Machine’s strength is that it’s a curated set of sounds. I’ve gone through and selected each sound by hand and set it up so that it has all these nice possibilities to go to.

The visuals are obviously a big part of Dream Machine. How did that come about, and how do they react to the music?

Dan: Originally I don’t even know if we wanted to build the whole thing in Unreal Engine. But then James kept getting so deep into the Unreal Engine stack and all these things plugged together that it went way beyond being audio responsive, where now you can randomly jump between hundreds of cameras each time a beat is generated. Once you master the Unreal Engine you can apply it in a much bigger way.

James: There’s a lot of different things coming from Ableton into Unreal Engine. I have a dummy clip that sends something like, “CC22, message 127,” and Unreal Engine picks that up and says, “I have to change the camera to snow world.” That clip in Ableton has a Follow Action, so now we’re using randomised Follow Actions in Ableton to control events in Unreal Engine, such as camera changes, scene changes, things like that. I need Unreal Engine to know when there’s a new beat, because when there’s a new beat the sky colour is going to change, the sun position is going to change. I find a range where I want the sun to go, and say, “every time this happens, randomise yourself within this range.” And then you stack enough of those up so the sky is changing, the characters are changing, the fog is changing, all that stuff changes when things happen in Ableton. Anything in Unreal Engine you can change with your mouse, you can eventually connect to Ableton with a MIDI signal. I’m still thinking of shit to do with it.
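The receiving side of this can be modelled as a small dispatcher. The sketch below is plain Python, not Unreal Engine code (which would more likely live in Blueprints or C++). The CC22/127 "snow world" mapping is taken from James's example; the new-beat controller number, the sun angle range and the function names are assumptions for illustration.

```python
import random

CAMERA_CC = 22                   # from the interview example
CAMERAS = {127: "snow world"}    # from the interview example
NEW_BEAT_CC = 23                 # hypothetical controller number
SUN_ANGLE_RANGE = (10.0, 70.0)   # hypothetical range, in degrees


def parse_control_change(message):
    """Decode a 3-byte MIDI Control Change into (channel, controller, value)."""
    if len(message) != 3 or message[0] & 0xF0 != 0xB0:
        return None  # not a Control Change message
    return message[0] & 0x0F, message[1], message[2]


def handle_message(message, scene, rng):
    """Update a scene dict in response to one incoming MIDI message."""
    parsed = parse_control_change(message)
    if parsed is None:
        return scene
    _channel, controller, value = parsed
    if controller == CAMERA_CC and value in CAMERAS:
        scene["camera"] = CAMERAS[value]
    elif controller == NEW_BEAT_CC:
        # "Randomise yourself within this range" on every new beat.
        scene["sun_angle"] = rng.uniform(*SUN_ANGLE_RANGE)
    return scene


scene = handle_message(bytes([0xB0, 22, 127]), {}, random.Random(0))
print(scene["camera"])  # snow world
```

Stacking more entries like `NEW_BEAT_CC`, each with its own range, would cover the sky, fog and character changes mentioned above.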

Did you have any tips for anyone who’s seen the Dream Machine project and would like to have a go themselves?

James: I’d say from a practical standpoint, make every chord you can, put it as a clip in Ableton and just set a Follow Action to ‘Random’ or to ‘Other’ and go from there. Build clusters of clips. Dive into Follow Actions, that’s a great place to start. And then conceptually, read the Wikipedia for “Aleatoric Music.” It’s essentially what I’m doing, which is compositions that use randomness as part of the composition. An example of aleatoric music could be a piece of sheet music where you can play from wherever you want, at any given time. I think John Glass had a piece like that. So there’s some cool reading to be done on it. And there’s a lot of startups right now that are exploring actual AI-generation stuff as well. I would just dive into all that.
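For readers who want to prototype the clip-hopping idea outside Ableton, here is a minimal Python model of two random follow behaviours. The mode names `any` and `other` are this sketch's own labels: `other` never repeats the current clip, while `any` may, which is assumed here to be roughly what "Random" refers to.

```python
import random


def follow_action(current, clip_count, mode, rng):
    """Pick the next clip index in a group of `clip_count` clips.

    "any" may pick the current clip again; "other" never does.
    """
    if mode == "any":
        return rng.randrange(clip_count)
    if mode == "other":
        if clip_count < 2:
            return current  # nothing else to jump to
        choice = rng.randrange(clip_count - 1)
        # Skip over the current index so every other clip is equally likely.
        return choice if choice < current else choice + 1
    raise ValueError(f"unknown mode: {mode}")


rng = random.Random(0)
clip = 0
sequence = []
for _ in range(8):
    clip = follow_action(clip, 5, "other", rng)
    sequence.append(clip)
```

With `other`, consecutive entries in `sequence` always differ, so a cluster of chord clips keeps moving instead of occasionally stalling on one chord.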

Check out the stream (plus watch the Dream Machine set-up itself in action) over at dreammachine.ai. And be sure to check out VHS Audio Degradation Suite and James’s other REAKTOR creations (MRX90 in particular is yet more warbly, tape-inspired goodness). It’s all available for free in the REAKTOR User Library.