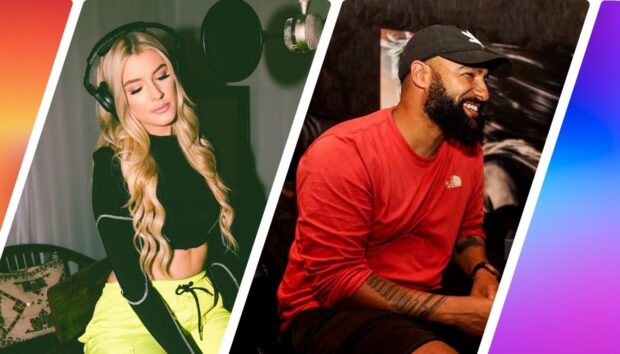

From fronting hybrid bass-music outfit Jahcoozi to her other-worldly, ultra-sonic creations as solo artist Perera Elsewhere, Berlin-based producer Sasha Perera has always been one to push creative boundaries. Her sophomore LP All of This, released earlier this year, is a dark and uniquely produced body of work that combines an eclectic set of instruments with experimental textures and a vast array of vocal processing. With Perera currently touring the LP, Native Instruments catches up with the producer in her Berlin studio to discuss how she creates some of these one-off sounds and transfers them to a live setting using live instrumentation, MASCHINE JAM, KOMPLETE KONTROL, and more.

What’s your current setup?

At the moment, everything goes through Ableton Live, which I sometimes use as a sequencer as well as for effects, for Max for Live, and to play MIDI instruments or other VSTs, like a pitch-shifter plug-in for example. I also use Ableton and the Komplete Kontrol S49 to play with MIDI instruments and parameters from the Komplete library, like some of the Vintage Organs or Reaktor Monark synths. I also create my own virtual instrument racks and drum racks with the sounds I used to make my record.

As a band, we all have in-ear monitoring which goes through a MOTU soundcard. I use the MOTU because it has a lot of inputs and also acts as a stand-alone mixer, so if the whole computer crashes, we’d still have some stuff running through there, like my main mic and Roland Aira Voice Transformer, my drummer’s e-bass drum kick or my bass player’s bass.

Do you have two setups, one with a band and one without?

Yes. I am definitely trying to focus on the band for this project, as I love how it gels together, being able to play lots of unquantized stuff. But sometimes I have to play smaller solo sets, like recently in Los Angeles or London. It makes sense to have a smaller setup at times, and it’s fun to try stuff out that I can then take back to the band.

For example, after playing in Liverpool solo, I started using the Leap Motion sensor and the Maschine Jam, which I use to switch between the different instrument racks for different tracks and also to trigger audio files using the Ableton Simpler, for example. I can drag a file of my own vocal, or any audio file, into the Simpler and it chops it into different samples, whose tuning or other parameters you can then change with the effects. I use a special template that allows you to control Ableton using the Maschine Jam, as well as assigning MIDI commands separately according to what I need to do. I love the Maschine Jam for jamming with other people and for composing on the fly. It’s cool for live too, but I actually use it more off stage when producing or in a jam session with MIDI sync. It offers endless possibilities, especially when jamming in the session window of Ableton and for live looping.

So how do you play live?

When we (me, my drummer Toto Wolf, and my bass player Oren Gerlitz) are playing with no click or sequenced playback, I will often use the keyboard to play one of the instrument racks. For a song like ‘Weary’ my instrument rack consists of samples of pitched and processed clarinets, bits I played on a Buchla and a Polysynth, some stretched spooky vocal pad that I made from my own voice – and other sounds which I used to produce the song for the album. I assign those sounds to the lower part of the keyboard for my left hand, whilst on the upper part of the keyboard I have a tweaked vintage organ / synth VST from the Komplete Library to play the chords and harmonies of the song with my right hand.
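The keyboard split described above can be pictured as a simple routing rule on MIDI note numbers. The following is only a minimal sketch: the split point (middle C, note 60) and the zone names `sample_rack` and `organ_synth` are assumptions made for illustration, not details from the interview, and in practice a split like this would be set up inside the DAW rather than in code.

```python
# Hypothetical split point and zone names; none of these come from the interview.
SPLIT_NOTE = 60  # MIDI note number of middle C, assumed here as the split point


def route_note(note: int, split: int = SPLIT_NOTE) -> str:
    """Return the zone that should receive a given MIDI note number."""
    if not 0 <= note <= 127:
        raise ValueError("MIDI note numbers range from 0 to 127")
    # Notes below the split go to the left-hand sample rack,
    # notes at or above it go to the right-hand organ/synth.
    return "sample_rack" if note < split else "organ_synth"


if __name__ == "__main__":
    for n in (36, 59, 60, 84):
        print(n, route_note(n))
```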

I sing over the main microphone, which goes through Ableton for effects and a live vocal pitching VST. I have a Logidy MIDI-over-USB foot controller which I use to trigger a plug-in called Manipulator. I’ve assigned it to three pedals where I can trigger +5, +5 and -12 tones as options for live pitching of my vocal. This pitched vocal is layered on top of the main vocal. I also have a second microphone going through a Roland Aira Voice Transformer, which is a standalone piece of hardware that offers some pretty cool pitching options and crazy reverb and distortion possibilities. Again, if the computer crashes I still have a lot of vocal effect options, as it’s a standalone thing.
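For readers curious what a shift of +5 or -12 means in frequency terms, here is a small sketch. It assumes the "tones" mentioned are semitone steps in twelve-tone equal temperament, which the interview does not state, and it says nothing about how Manipulator itself works. The pedal names are hypothetical.

```python
def pitch_ratio(semitones: float) -> float:
    """Frequency ratio for a shift of `semitones` in 12-tone equal temperament."""
    return 2.0 ** (semitones / 12.0)


# Hypothetical pedal-to-shift mapping, using two of the values quoted above.
PEDAL_SHIFTS = {"pedal_up": 5, "pedal_down": -12}

if __name__ == "__main__":
    for name, shift in PEDAL_SHIFTS.items():
        print(f"{name}: {shift:+d} semitones -> x{pitch_ratio(shift):.4f}")
```

Under that assumption, a shift of -12 halves the frequency (one octave down), while +5 raises it by roughly a third (a perfect fourth up).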

Towards the end of the show with ‘Light Bulb’ I love playing the spooky suicidal piano using the Maverick. It’s like a goose bump tsunami. I guess it’s cool for people to finally hear a thick and dense actual piano sound after you’ve played so many of your fucked up weird sounds on the same keyboard. But there are also songs where we all play on top of a click to allow for sequenced elements and stems to run as a playback. It’s hard to know what works until we try it. Some tracks feel static when stuff runs on the sequencer, but other stuff works amazingly. So we just try stuff and make other versions until we find what works.

My drummer sometimes plays an e-bass drum kick pedal (to play an 808, for example) and uses an e-drum module to play the different bass drums, snares and sounds from my productions. On other tracks he plays the natural bass drum. Sometimes he plays with his hands and sometimes with mallets, and he also does some pretty psycho-sounding scraping on the cymbals and stuff! It’s great to work with a drummer who can do so much but also realises he doesn’t have to do it all the time.

My bass player plays bass guitar as well as a synth bass on a couple of tracks. He can also trigger some of the sounds I made for the album on a McMillen 12-step USB MIDI foot control, because sometimes when I’m singing and playing bass and treble chords, or playing trumpet and using the pitchers, I simply don’t have enough hands and he can add other sounds for transitions. He also uses the pitcher plug-in Manipulator, also with a pedal – but it’s programmed to be on one note – it’s more of a vocoder and sounds more like a classic pop autotune thing. You can really play with the quality of the voice – and he does some really nice, dancehall-like autotune pop vocals over a really dystopian track. It becomes like a jilted gospel duet if we sing together.

When you’re playing unquantized, who starts, you or the rhythm section?

It depends. We’ll talk about it beforehand and we try stuff out, obviously. Sometimes we are even looser than that. The track will end and we’ll carry on playing and merge into the sounds of a new track. It’s a balance between relaying what I’ve done on the album and bringing new energy and creativity, and of course spontaneity is what makes it live. I think they’re great albums, but why would you need to hear them in exactly the same format? I don’t think they work like that. It would feel super constructed if every bar was the same. I also think it is a listening album, and making versions which grab people in the live environment is a cool challenge. You want them to feel you, and risk and even impulsiveness are important for that.

How do you incorporate the trumpet into your performance?

As I mentioned above, the Logidy MIDI pedal is used to trigger the Manipulator VST, which allows me to do live, real-time pitching of my voice without latency. I play my trumpet over the same main microphone and can then do live, real-time pitching of my trumpet in three different octave tones. It ends up super psychedelic, and if I turn my Roland Aira Voice Transformer microphone up too, then I’m mixing both the pitching and the crazy reverbs live. It adds so much depth to this whole trumpet thing. I don’t really advertise the fact I play trumpet so much, because I compose music and sing and it’s a lot for people to focus on. Maybe I don’t wanna ‘trumpet type-cast’ myself, but when I get the trumpet out they are just super shocked. Somehow it’s the ultimate vibe bringer.

What in-ear monitoring do you use?

I use these great headphones made by HearSafe. They slot into ear-fitted earplugs that were made from a mould of my ear. The good thing about in-ear is that you can have rhythmic clicks so that the band can all start together with sequenced tracks if need be. That can feel dramatic in a good way. In-ear is also amazing for melodic click, especially with bass / club music or music that is more rhythmic than melodic, as the melody will often come in ages later, and as a vocalist you have no idea how to come in if you don’t hear the exact tuning of the bass drum. With in-ear, you can have a melodic pad which only you can hear on your headphones, so that you sing in perfect key with the bass drum, and when the melodics do come in later it will all fit. So if you only wanna start with the rhythm section and come in later with the melodics, in-ear is perfect for that.

You also get a cleaner sound, because you’re not using the monitors, so there is no sound leakage over the mic or feedback over the monitors. You don’t play as loud, because you’re not pushing the monitors to be louder. Each of us can have our very own in-ear mix, which is saved as a setting.

When you were making the record, did you have the live aspect in mind?

No. I’ve done some really stupid things, like not bothering to tune a guitar before playing and then just recording it. And then when I try to play other synths or MIDI instruments over it, I realise I have to use the pitchwheel to modulate between notes, because I’ve not done it in a particular key. Instead of re-recording the guitar, I just produce the whole song like that. The problems only arise when my bass player asks me what key the song is in, and I realise that I have to go back and either transpose or repitch every tiny little sound I made in order to use any of them with my band. Slowly I am learning from my errors.

Having said that, the best stuff happens unintentionally. I’m not a singer-songwriter that just sits down, writes and records. Sometimes I only know what the first two bits are, and I record. Then I manipulate my vocals and make double parts and backings by manipulating the original file. The songwriting and composition occurs partly during the editing. I just hear something I like within my vocal recording that was unintentional or which wasn’t meant to be the hook and decide that part is the magic. That’s what I love about production, that you create this whole world and atmosphere, not just one part of it. With ‘Bizarre’, the first song I released as Perera Elsewhere, the hookline was cut from a mistake. I recorded it, listened to it and thought, ‘whoaah, that extra bit I did unintentionally is the most super special. That’s the magic’.

I usually fully produce the song first; often it is even released before I take it to the band and try to put it on the stage. For the second record, the song ‘Big Heart’ was already the band version we played live, with samples from my ‘original’ version. I had a totally different version I made in my living room years ago. I had started rehearsing that track with the band before I had a version I could call finished, and then I recorded us in rehearsal and used the drums in that. I muted the bass guitar, programmed synth bass instead and used some of the vocal takes, playing live the samples I’d produced before. So sometimes it becomes a hybrid, and there is a lengthy journey involved in song making, with different versions till I find the right one, whereas others just work in one take instantly, like with ‘All of This’ or ‘Light Bulb’, for example. I never really know how things are going to pan out. That is what makes my job so much fun and frustrating all at once.

photo credits: Markus Werner

Listen to Perera Elsewhere’s contribution to KOMPLETE SKETCHES here.

Watch Perera Elsewhere perform songs in her studio for Electronic Beats below.